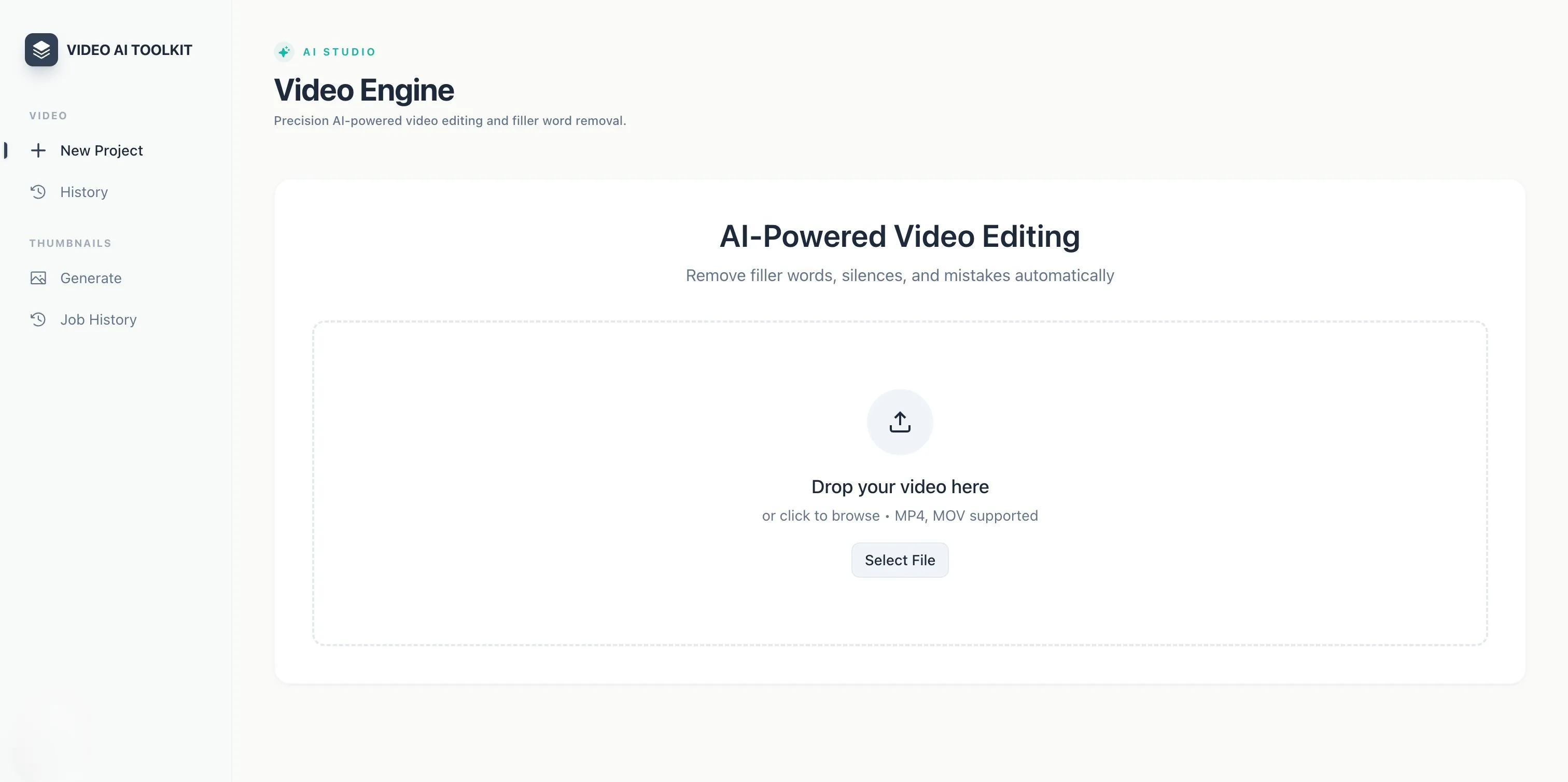

AI Video Editor & Thumbnail Generator

The team spent 30–60 minutes per video on mechanical post-production - manually cutting every filler word, long pause, and redo section.

Project Overview

An internal AI tool built for a US-based marketing agency. It automatically cleans up recorded videos by detecting filler words, long silences, and re-recorded sections - then lets the team review suggested cuts before rendering. A separate module generates YouTube thumbnail variants using the creator's reference photos and brand colors.

The Challenge

The agency's team was spending 30–60 minutes per video on mechanical post-production - manually cutting every "um", long pause, and "redo that" from recordings. Thumbnail creation added more time on top. The goal was to eliminate that busywork without removing editorial control.

My Role and Contributions

Designed and built the full system end-to-end: the Python backend, AI pipeline, FFmpeg rendering logic, background job architecture, and the React frontend. Made all architecture and model selection decisions.

What I Built

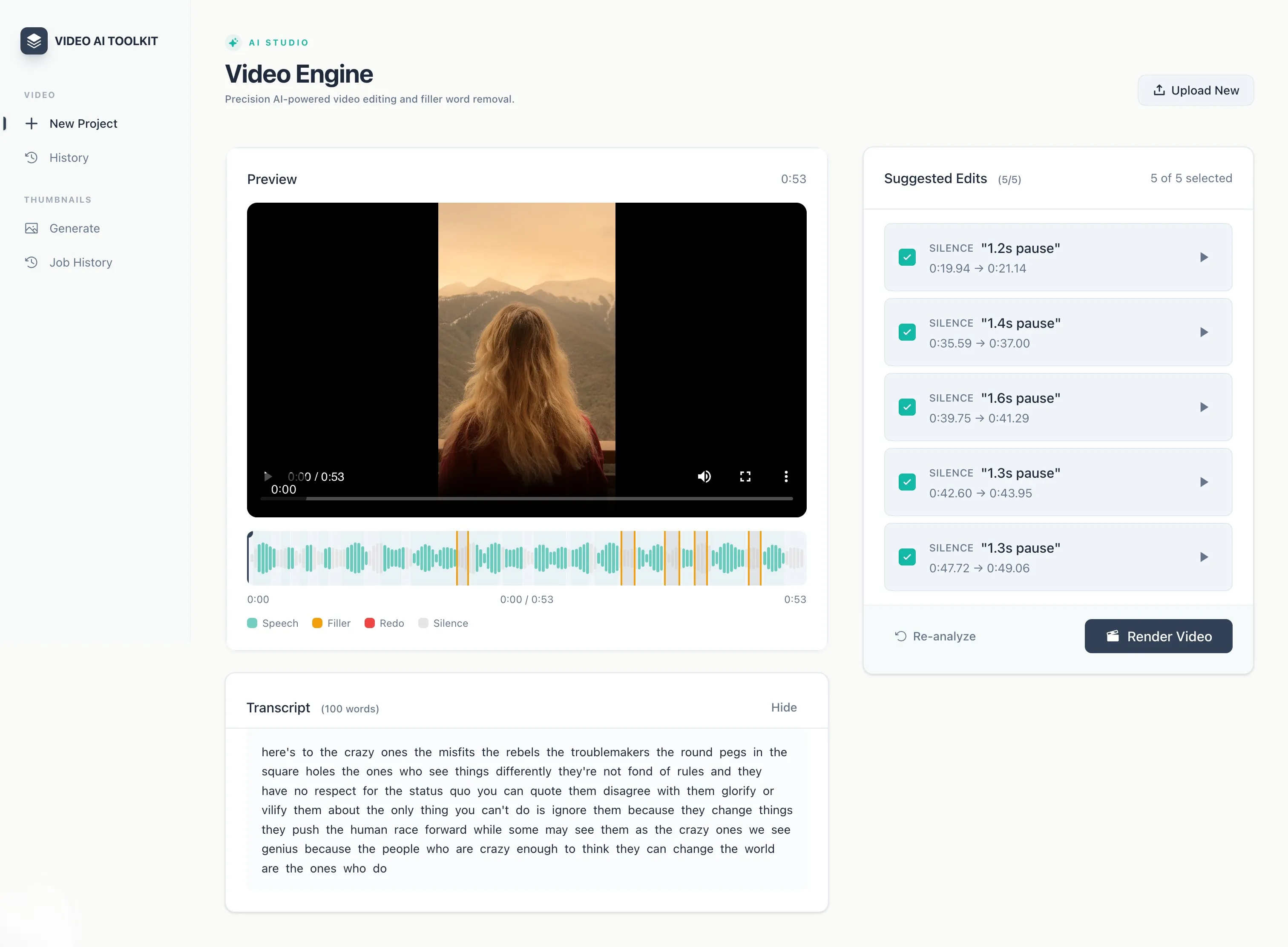

- Transcription + filler detection - Deepgram Nova-2 transcribes uploads with word-level timestamps and filler word preservation; GPT-4o reads the structured transcript to flag um, uh, long silences (>1.5s), and full redo sections triggered by phrases like "cut cut" or "start over"

- Human review before render - filler cuts are auto-approved; redo section cuts require manual confirmation; nothing is rendered until the editor signs off

- Natural-sounding FFmpeg output - each cut point gets a context-aware silence pad (50ms mid-sentence, 80ms after comma, 150ms after full stop) plus audio crossfades to prevent clicks; the last video frame is frozen during padding to avoid black flashes

- Gemini thumbnail generation - takes up to 3 reference photos from S3, optionally researches trending styles via Gemini + Google Search, and outputs multiple 1280×720 thumbnail variations per request with enforced face realism and brand color usage

- Background job queue - all heavy operations (transcription, analysis, rendering) run as Celery tasks on Redis, separate from the API; frontend receives real-time progress via SSE streaming

- Full job history - every job persisted in PostgreSQL with status and output references; files stored in S3 with pre-signed URLs
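The silence threshold and approval rules above can be illustrated in a few lines of Python. This is a minimal sketch, not the project's code: in the real pipeline GPT-4o flags cuts from the structured transcript, whereas this applies the 1.5s gap rule directly to word-level timestamps. The `Word` and `Cut` types and both function names are hypothetical, and auto-approving silence cuts is an assumption (the text only states the policy for filler and redo cuts).

```python
from dataclasses import dataclass

SILENCE_THRESHOLD_S = 1.5  # threshold stated in the text; the rest is illustrative


@dataclass
class Word:
    text: str
    start: float  # seconds
    end: float    # seconds


@dataclass
class Cut:
    start: float
    end: float
    kind: str       # "filler", "silence", or "redo"
    approved: bool  # False means the editor must confirm before render


def default_approval(kind: str) -> bool:
    """Filler cuts are auto-approved; redo cuts need manual confirmation.

    Treating silence cuts as auto-approved is an assumption.
    """
    return kind != "redo"


def find_long_silences(words, threshold=SILENCE_THRESHOLD_S):
    """Return one silence cut for every gap between words longer than threshold."""
    cuts = []
    for prev, nxt in zip(words, words[1:]):
        if nxt.start - prev.end > threshold:
            cuts.append(Cut(prev.end, nxt.start, "silence", default_approval("silence")))
    return cuts


words = [Word("hello", 0.0, 0.5), Word("world", 2.5, 3.0), Word("again", 3.2, 3.6)]
silences = find_long_silences(words)
```

Here only the 2.0s gap between "hello" and "world" exceeds the threshold, so a single cut spanning 0.5s to 2.5s is proposed.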

Key Engineering Decisions

- Two-model split - Deepgram handles transcription (fast, filler-word-aware); GPT-4o handles reasoning (context-aware cut detection). Cleaner than asking one model to do both.

- GPT-4o at temperature 0.1 with forced JSON - the prompt explicitly excludes common transition words from the filler list. Low temperature plus the json_object response format makes output stable and directly parseable.

- Context-aware pause durations - rather than a fixed gap at every cut, the renderer checks punctuation on the word before each cut and injects proportional silence. Makes the output sound like natural speech.

- Celery over inline async - rendering and transcription can run for minutes. Background tasks keep the API responsive and make the system restartable if a worker crashes.
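The context-aware pause rule can be sketched as a small lookup on the word before each cut. The pad values (50ms, 80ms, 150ms) come from the description above; the function name `pad_ms_for` is hypothetical, and treating "!" and "?" like a full stop and ignoring trailing quotes or brackets are assumptions, not confirmed behavior of the renderer.

```python
def pad_ms_for(word_before_cut: str) -> int:
    """Pick a silence pad (in milliseconds) from the punctuation ending the word.

    150 ms after a sentence end, 80 ms after a comma, 50 ms mid-sentence.
    """
    # Assumption: closing quotes and brackets are ignored when reading punctuation.
    stripped = word_before_cut.rstrip("\"')]")
    # Assumption: "!" and "?" are treated the same as a full stop.
    if stripped.endswith((".", "!", "?")):
        return 150
    if stripped.endswith(","):
        return 80
    return 50


# Words immediately preceding three example cut points.
pads = [pad_ms_for(w) for w in ["done.", "however,", "and"]]
```

Keeping the rule as a pure function of the preceding word makes it easy to unit test separately from the FFmpeg filter graph that applies the padding and crossfades.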

Outcomes

Compresses the mechanical post-production step - filler removal, silence cleanup, redo detection - into a single upload and a review pass. Thumbnail creation goes from a design session to a few minutes of picking between AI-generated options.

Technologies Used

- Backend: Python, FastAPI, Celery, Redis

- AI - Transcription: Deepgram Nova-2

- AI - Analysis: OpenAI GPT-4o

- AI - Thumbnails: Google Gemini 2.5 Flash Image

- Video Rendering: FFmpeg, Pillow (PIL)

- Database: PostgreSQL, SQLAlchemy, Alembic

- Storage: AWS S3

- Frontend: React, TypeScript, TanStack Query

- Infrastructure: Docker, Docker Compose
